Qwen-ASR Local Model Integration

Ensure you have upgraded to v3.97 or later.

Select the Qwen-ASR (Local) channel from the speech recognition channels. Two model sizes are offered, 0.6B and 1.7B; the latter is more accurate but consumes more resources.

Manual Download Method

The model will be downloaded online automatically upon first use, but you can also download it manually.

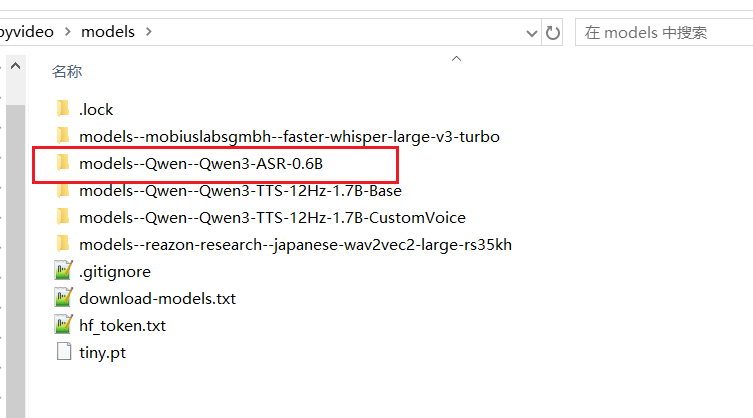

- Check if the

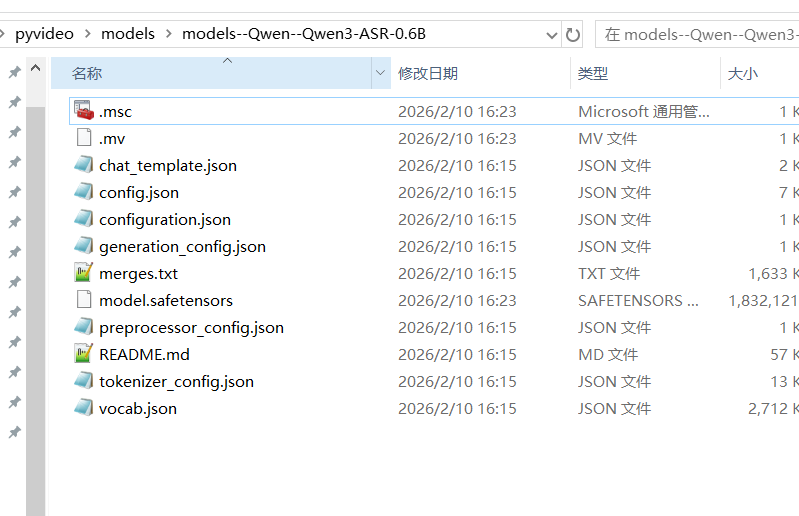

models--Qwen--Qwen3-ASR-0.6Bandmodels--Qwen--Qwen3-ASR-1.7Bfolders exist within themodelsdirectory of the software. If they do not exist, create them. - The

0.6Band1.7Bmodels differ only in size; the download and usage methods are identical. You just need to ensure the different models are placed in their corresponding model folders. - To download the

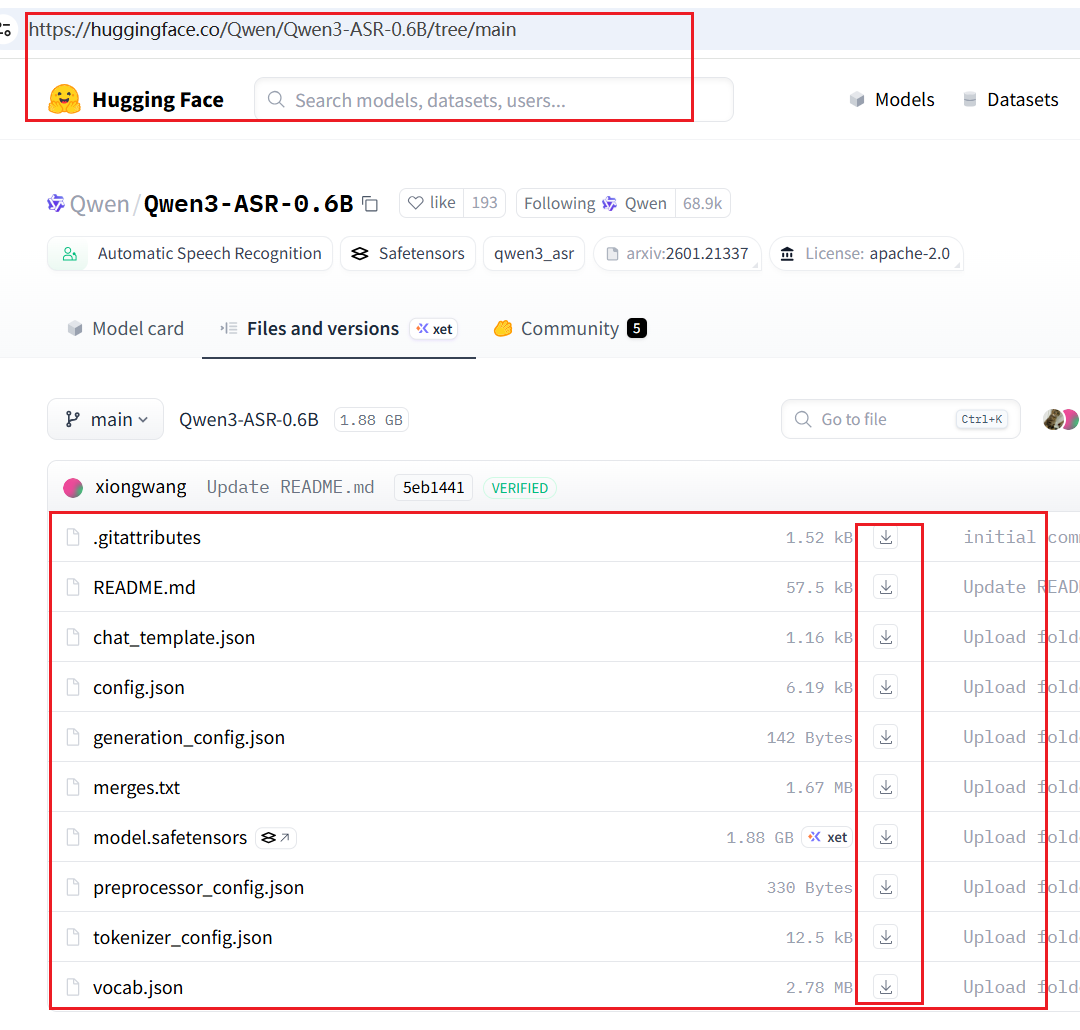

1.7Bmodel: Open https://huggingface.co/Qwen/Qwen3-ASR-1.7B/tree/main, download all files, and place them in themodels/models--Qwen--Qwen3-ASR-1.7Bfolder. - To download the

0.6Bmodel: Open https://huggingface.co/Qwen/Qwen3-ASR-0.6B/tree/main, download all files, and place them in themodels/models--Qwen--Qwen3-ASR-0.6Bfolder. - Select the

0.6Bor1.7Bmodel in the software interface as needed.
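The folder check in the manual download steps can also be scripted. The following is a minimal sketch, not part of the software: the helper names (`prepare_model_dir`, `is_populated`, `download_model`) are hypothetical, and the optional download helper assumes the `huggingface_hub` package is installed and that huggingface.co is reachable. Only the folder names and repository IDs come from the steps above.

```python
from pathlib import Path

# Folder names as given in the manual download steps.
MODEL_FOLDERS = {
    "0.6B": "models--Qwen--Qwen3-ASR-0.6B",
    "1.7B": "models--Qwen--Qwen3-ASR-1.7B",
}


def prepare_model_dir(software_dir, size):
    """Create the model folder for the given size if missing; return its path."""
    target = Path(software_dir) / "models" / MODEL_FOLDERS[size]
    target.mkdir(parents=True, exist_ok=True)
    return target


def is_populated(model_dir):
    """Return True if the folder already contains at least one file."""
    return any(p.is_file() for p in Path(model_dir).iterdir())


def download_model(software_dir, size):
    """Optional alternative to downloading the files in a browser.

    Assumption: huggingface_hub is installed (pip install huggingface_hub).
    """
    from huggingface_hub import snapshot_download  # imported lazily on purpose

    target = prepare_model_dir(software_dir, size)
    snapshot_download(repo_id=f"Qwen/Qwen3-ASR-{size}", local_dir=str(target))
    return target
```

For example, `prepare_model_dir("/path/to/software", "1.7B")` creates `models/models--Qwen--Qwen3-ASR-1.7B` under that directory if it is missing, and `is_populated(...)` tells you whether any files have been placed there yet.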

Timestamp Alignment

Qwen officially uses the Qwen/Qwen3-ForcedAligner-0.6B model for timestamp alignment by default, which requires passing in the complete audio as a single long file. In testing, however, this approach consumed a significant amount of VRAM, was relatively slow, and could not conveniently report transcription progress. With long audio files, the interface stays unchanged for a long time, which makes it hard to tell whether the software has frozen.

Therefore, this model is not used for alignment by default. Instead, the ten-vad model pre-slices the audio into short segments before transcription, and the segments are then inferred in batches of 8, which reduces VRAM usage while keeping inference speed reasonable. The downside is that for audio with fast speech, unclear pauses, or noise, sentence segmentation may be less accurate than with ForcedAligner. If you wish to use ForcedAligner for alignment, you can deploy from the source code and make the following modifications:

- In the software directory, open `videotrans/process/stt_fun.py` and rename `qwen3asr_fun` to `qwen3asr_fun_bak`. Change:

      def qwen3asr_fun(
          cut_audio_list=None,
          ROOT_DIR=None,
          logs_file=None,
          defaulelang="en",
          is_cuda=False,
          audio_file=None,
          TEMP_ROOT=None,
          model_name="1.7B",
          device_index=0  # GPU index
      ):

  to:

      def qwen3asr_fun_bak(
          cut_audio_list=None,
          ROOT_DIR=None,
          logs_file=None,
          defaulelang="en",
          is_cuda=False,
          audio_file=None,
          TEMP_ROOT=None,
          model_name="1.7B",
          device_index=0  # GPU index
      ):

- In the same `stt_fun.py` file, rename `qwen3asr_fun0` to `qwen3asr_fun`. Change:

      def qwen3asr_fun0(
          ROOT_DIR=None,
          logs_file=None,
          defaulelang="en",
          is_cuda=False,
          audio_file=None,
          TEMP_ROOT=None,
          model_name="1.7B",
          device_index=0  # GPU index
      ):

  to:

      def qwen3asr_fun(
          ROOT_DIR=None,
          logs_file=None,
          defaulelang="en",
          is_cuda=False,
          audio_file=None,
          TEMP_ROOT=None,
          model_name="1.7B",
          device_index=0  # GPU index
      ):

- Next, open `videotrans/recognition/_qwenasrlocal.py` in the software directory. Remove the `#` symbol before each of the following two lines:

      #tools.check_and_down_ms('Qwen/Qwen3-ForcedAligner-0.6B',callback=self._process_callback,local_dir=f'{config.ROOT_DIR}/models/models--Qwen--Qwen3-ForcedAligner-0.6B')
      #tools.check_and_down_hf(model_id='Qwen3-ForcedAligner-0.6B',repo_id='Qwen/Qwen3-ForcedAligner-0.6B',local_dir=f'{config.ROOT_DIR}/models/models--Qwen--Qwen3-ForcedAligner-0.6B',callback=self._process_callback)

  This enables automatic downloading of the alignment model. Alternatively, you can download it manually from https://huggingface.co/Qwen/Qwen3-ForcedAligner-0.6B/tree/main using the same method as for the ASR models, and place all files in the `models/models--Qwen--Qwen3-ForcedAligner-0.6B` folder.

- Still in the `_qwenasrlocal.py` file, change the code `return jsdata#self.segmentation_asr_data(jsdata)` to `return self.segmentation_asr_data(jsdata)`.

- Restart the software after completing the modifications.
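
The source modifications listed above are plain text substitutions, so they can be sketched as a small script. This is an illustrative sketch rather than a tool shipped with the software: `apply_edits` and `patch_file` are hypothetical helpers, and the script assumes each target string appears in the source files exactly as quoted in the steps (same spacing, no space after `#`). If a string is not found, it raises an error instead of writing anything, which also guards against running it twice.

```python
from pathlib import Path

# (text to find, replacement) pairs, applied in order. The order matters for
# stt_fun.py: the old qwen3asr_fun must be renamed before qwen3asr_fun0 takes its name.
STT_FUN_EDITS = [
    ("def qwen3asr_fun(", "def qwen3asr_fun_bak("),
    ("def qwen3asr_fun0(", "def qwen3asr_fun("),
]

QWENASRLOCAL_EDITS = [
    # Uncomment only the two ForcedAligner download lines.
    ("#tools.check_and_down_ms('Qwen/Qwen3-ForcedAligner-0.6B'",
     "tools.check_and_down_ms('Qwen/Qwen3-ForcedAligner-0.6B'"),
    ("#tools.check_and_down_hf(model_id='Qwen3-ForcedAligner-0.6B'",
     "tools.check_and_down_hf(model_id='Qwen3-ForcedAligner-0.6B'"),
    ("return jsdata#self.segmentation_asr_data(jsdata)",
     "return self.segmentation_asr_data(jsdata)"),
]


def apply_edits(text, edits):
    """Apply each (find, replace) pair once, in order; raise if a target is missing."""
    for old, new in edits:
        if old not in text:
            raise ValueError(f"expected text not found: {old!r}")
        text = text.replace(old, new, 1)
    return text


def patch_file(path, edits):
    """Patch a file in place, keeping the original next to it with a .orig suffix."""
    path = Path(path)
    original = path.read_text(encoding="utf-8")
    patched = apply_edits(original, edits)  # raises before anything is written
    path.with_name(path.name + ".orig").write_text(original, encoding="utf-8")
    path.write_text(patched, encoding="utf-8")
```

Under these assumptions, you would call `patch_file` once on `videotrans/process/stt_fun.py` with `STT_FUN_EDITS` and once on `videotrans/recognition/_qwenasrlocal.py` with `QWENASRLOCAL_EDITS`, then restart the software. Review the patched files by hand before relying on them.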